36 hours on ChatGPT, 4 days on Google. Same week, very different velocity.

ChatGPT had me at #4 in 36 hours. Google has me averaging position 30 with 0 clicks. Both are signals. Here's how to read them.

Last week I shipped 4 competitor-comparison pages for StatusPageBuddy. Same week, two different search engines treated them very differently.

ChatGPT had me ranked 4th out of 6 indie status page tools within 36 hours, with a labeled callout of "Best indie-hacker vibe."

Google has me at average position 30.1, with 12 impressions and zero clicks across the four pages combined.

If you're an indie founder thinking about SEO right now, the gap between those two numbers is the most useful data point I can give you this week.

What I actually shipped

Four pages, one each at:

- /alternatives/statuspage-io

- /alternatives/atlassian-statuspage

- /alternatives/better-stack

- /alternatives/instatus

Each page targets a specific buyer-intent keyword (the "X alternative for indie founders" search a real person types before picking a status page tool). Each has FAQPage schema, a signature framework name in the headings ("The $0 Statuspage Migration," "Status Page Without the Monitoring Stack," etc.), and 5 to 9 FAQ entries with literal answers an LLM can extract cleanly.
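
For anyone who hasn't written FAQPage schema before, here is a minimal sketch of what that markup can look like when generated in TypeScript. The `FaqEntry` type, the `buildFaqJsonLd` helper, and the sample question wording are assumptions for illustration, not the code or copy running on the site; only the schema.org field names are standard.

```typescript
// Minimal FAQPage JSON-LD builder using the schema.org vocabulary.
// FaqEntry and buildFaqJsonLd are hypothetical names for this sketch.
interface FaqEntry {
  question: string;
  answer: string;
}

function buildFaqJsonLd(entries: FaqEntry[]): string {
  const doc = {
    "@context": "https://schema.org",
    "@type": "FAQPage",
    mainEntity: entries.map((e) => ({
      "@type": "Question",
      name: e.question,
      // A short, literal answer is the part an LLM can lift cleanly.
      acceptedAnswer: { "@type": "Answer", text: e.answer },
    })),
  };
  return JSON.stringify(doc, null, 2);
}

// Illustrative entry; the wording is an assumption, not copied from the site.
const faqJsonLd = buildFaqJsonLd([
  {
    question: "Is StatusPageBuddy free?",
    answer:
      "Yes. The free tier includes 1 page, 5 components, and unlimited incidents, with no credit card required.",
  },
]);
```

The resulting string goes inside a `<script type="application/ld+json">` tag in the page head or body.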

Timeline: pages shipped May 11 to May 14. ChatGPT screenshots are from May 12 and 13. GSC data is from May 15. Everything below is 1 to 5 days old.

This isn't a brag. The zero clicks are real. The point is to figure out what 0 clicks actually means at this stage, and which numbers do mean something.

ChatGPT, 36 hours in

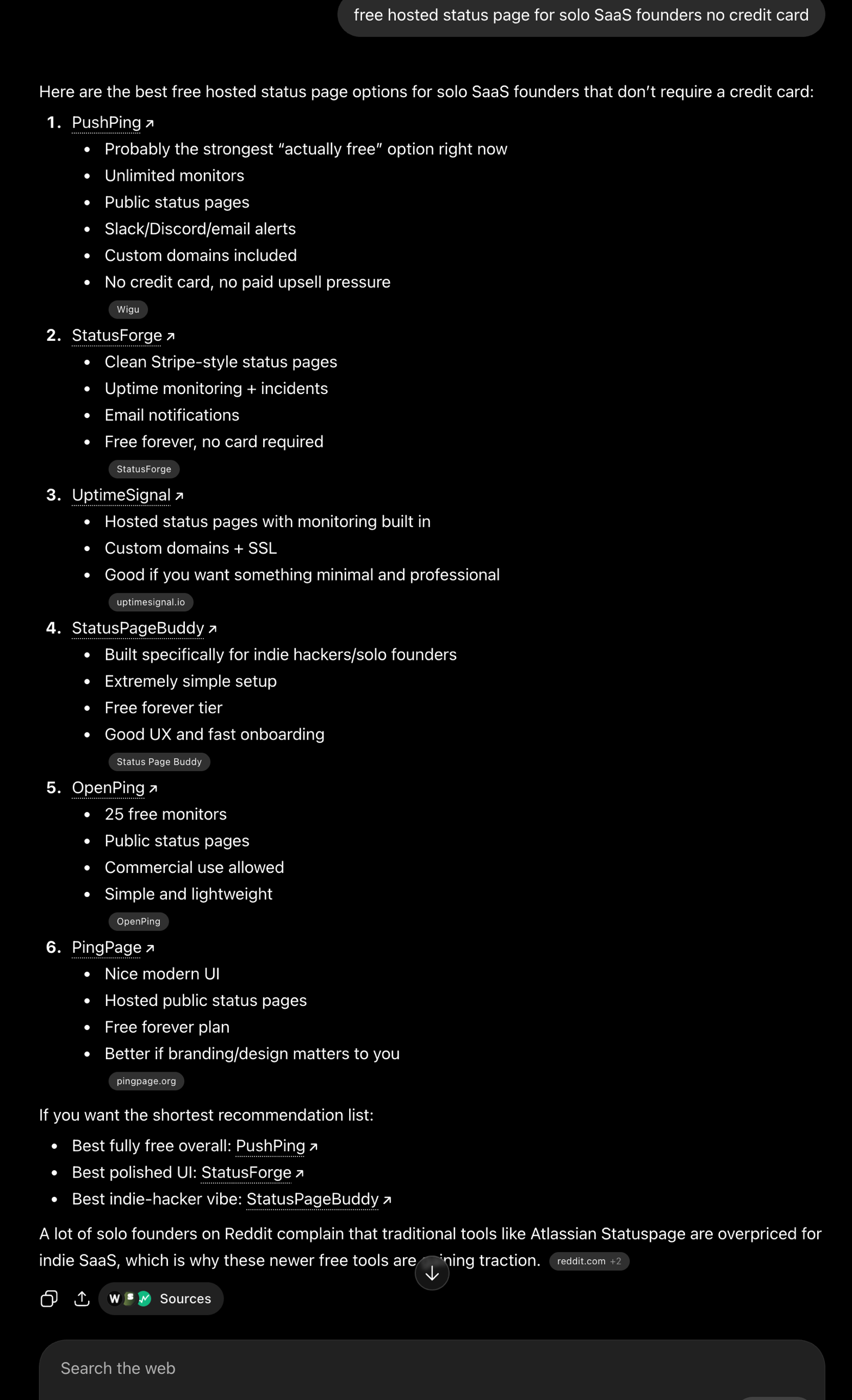

I asked ChatGPT (with web search): "free hosted status page for solo SaaS founders no credit card."

StatusPageBuddy showed up in the 4th slot of a 6-tool recommendation. Description: "Built specifically for indie hackers/solo founders. Extremely simple setup. Free forever tier. Good UX and fast onboarding."

At the bottom of the same answer, in the "shortest recommendation list," ChatGPT singled out three winners by category. One of them: "Best indie-hacker vibe: StatusPageBuddy."

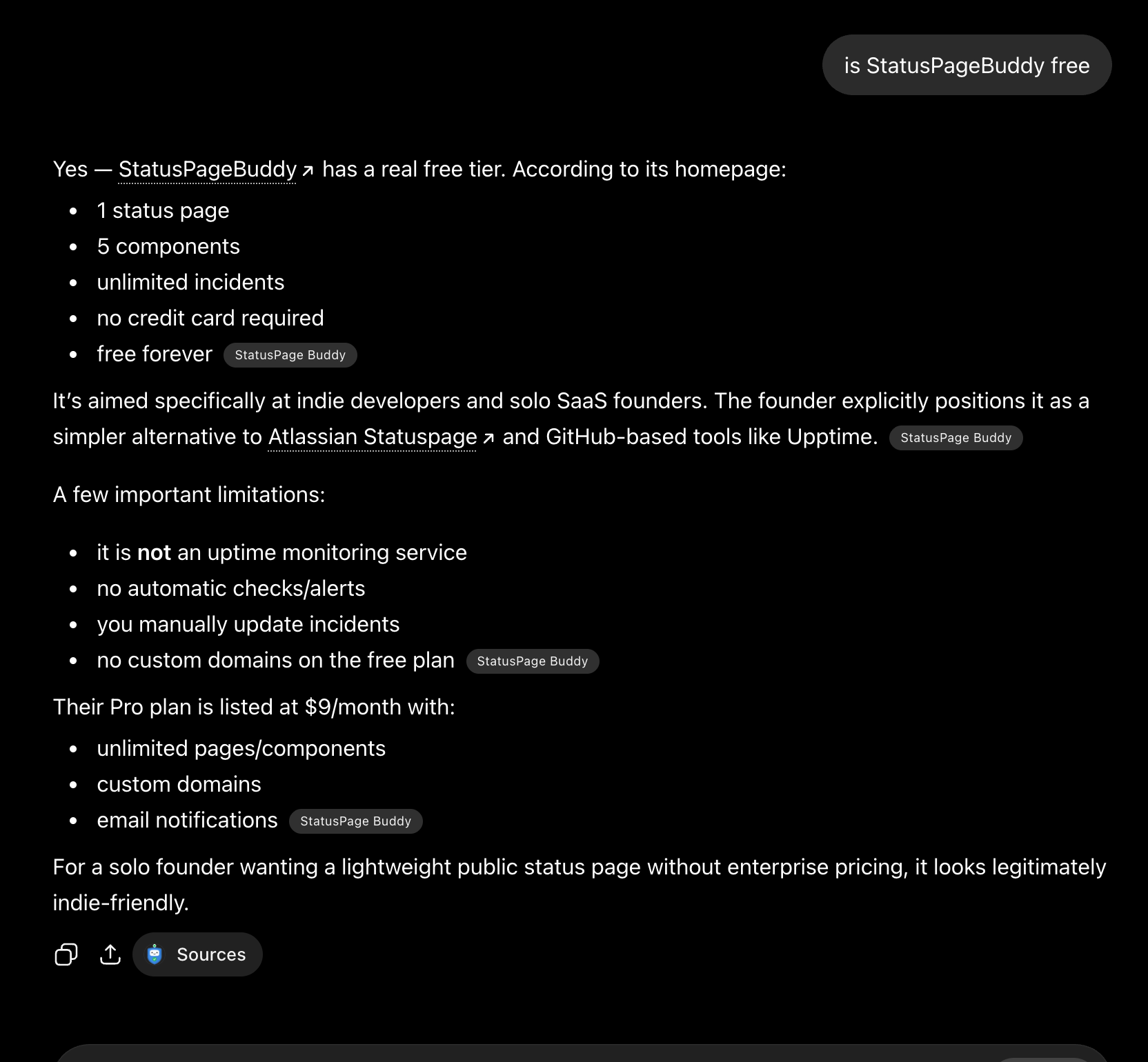

I tested it again with a brand check: "is StatusPageBuddy free."

ChatGPT answered with the exact tier specifics (1 page, 5 components, unlimited incidents, no credit card, free forever) and then volunteered a sentence I had not written anywhere: "The founder explicitly positions it as a simpler alternative to Atlassian Statuspage and GitHub-based tools like Upptime."

That sentence doesn't appear on any page of the site. It's a synthesis: ChatGPT had read enough of the new content to pick up the framing and produce an on-message sentence I never wrote.

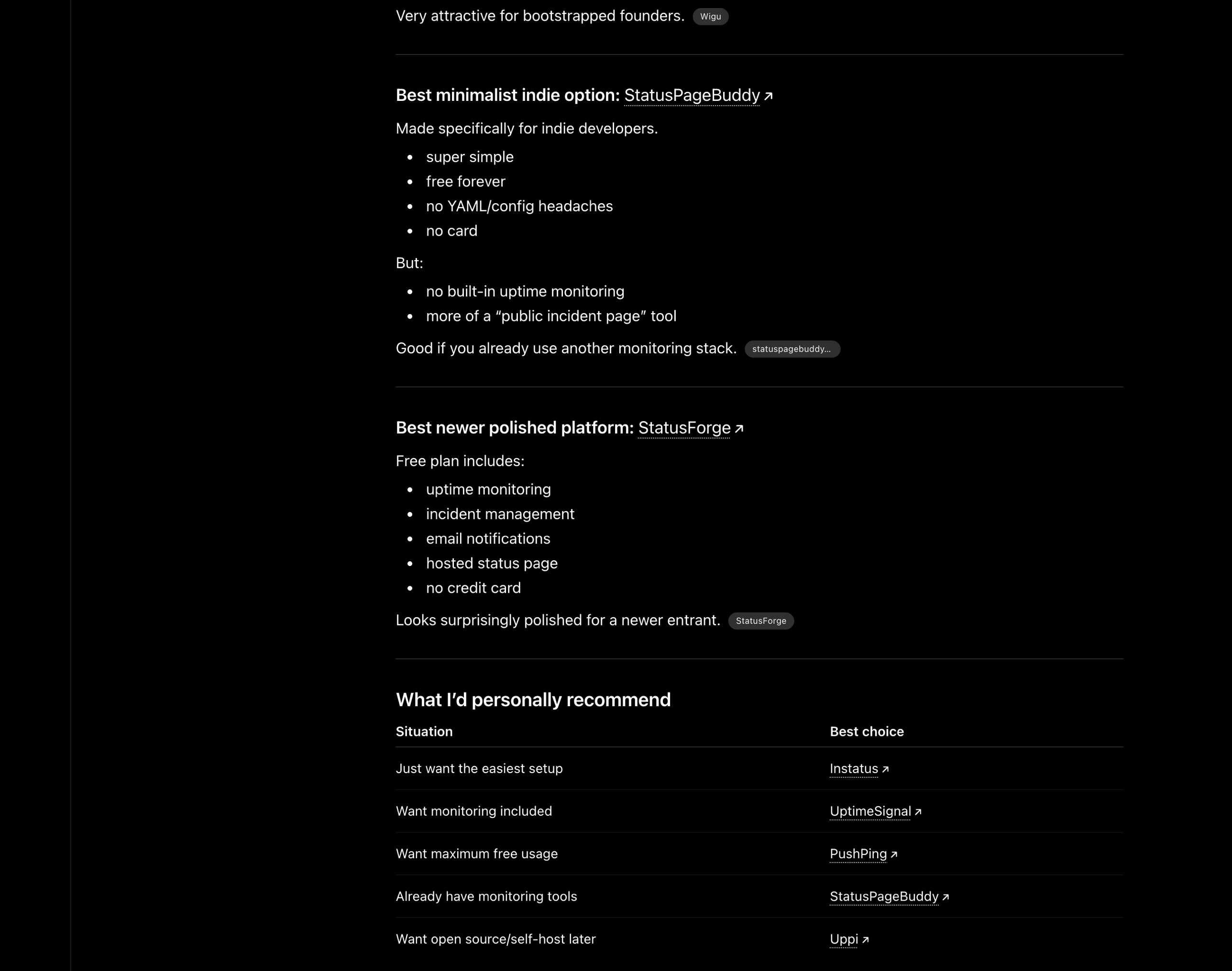

Same query, run a day later, gave a slightly different result:

"Best minimalist indie option: StatusPageBuddy" this time, with a use-case table further down explicitly recommending StatusPageBuddy for the "Already have monitoring tools" row. That row maps directly to the framework name from /alternatives/better-stack: "Status Page Without the Monitoring Stack." The framework name wasn't repeated verbatim. The use case was lifted.

A couple of things worth pulling out of this.

The signature framework names are working as cross-page anchors for the model. Repeating a phrase like "Status Page Without the Monitoring Stack" across H1, FAQ, and meta description gave ChatGPT a shorthand it now uses by reference, even when the phrase itself isn't repeated verbatim. Source attribution was the StatusPageBuddy site itself, not a Reddit thread or an aggregator, which also matters: LLMs weight self-published primary sources differently than secondhand summaries.

The list also includes products I cannot verify exist as separate things (PushPing, OpenPing, PingPage). ChatGPT hallucinates. Treat any LLM recommendation list as "these names look plausible to me," not as a directory of real products. That's what it is.

(One footnote: I initially thought I was looking at Perplexity. It's ChatGPT. The two UIs have converged enough in 2026 that I had to be corrected.)

Google, 4 days in

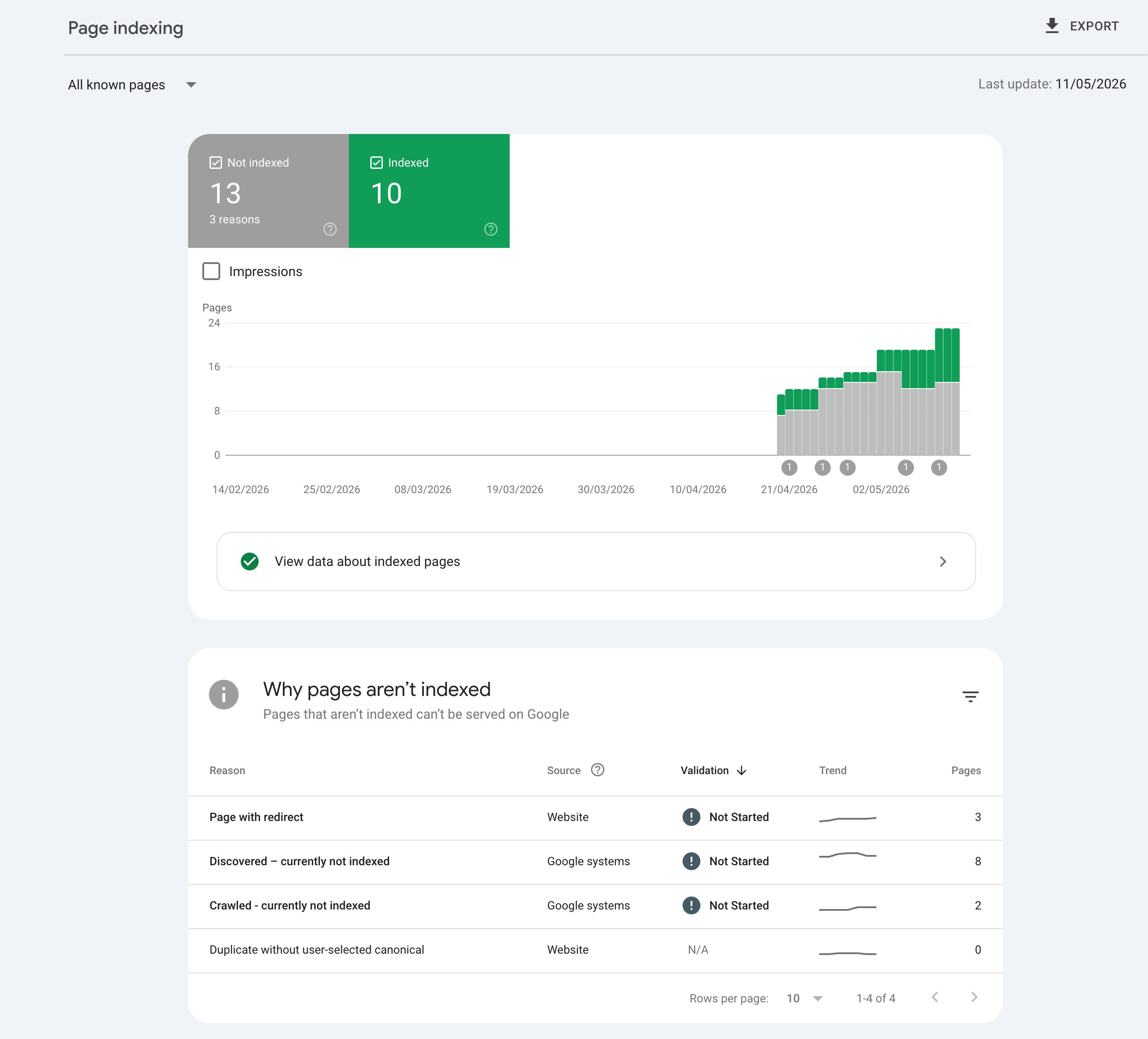

Google Search Console says 10 pages indexed, up from 7 a week earlier. The three new entries are all alternatives pages, so three of the four are in the index.

13 pages aren't indexed yet. GSC splits the gap into 8 "Discovered - currently not indexed," 2 "Crawled - currently not indexed," and 3 "Page with redirect" entries from the apex domain. All three buckets are normal for fresh content on a young site.

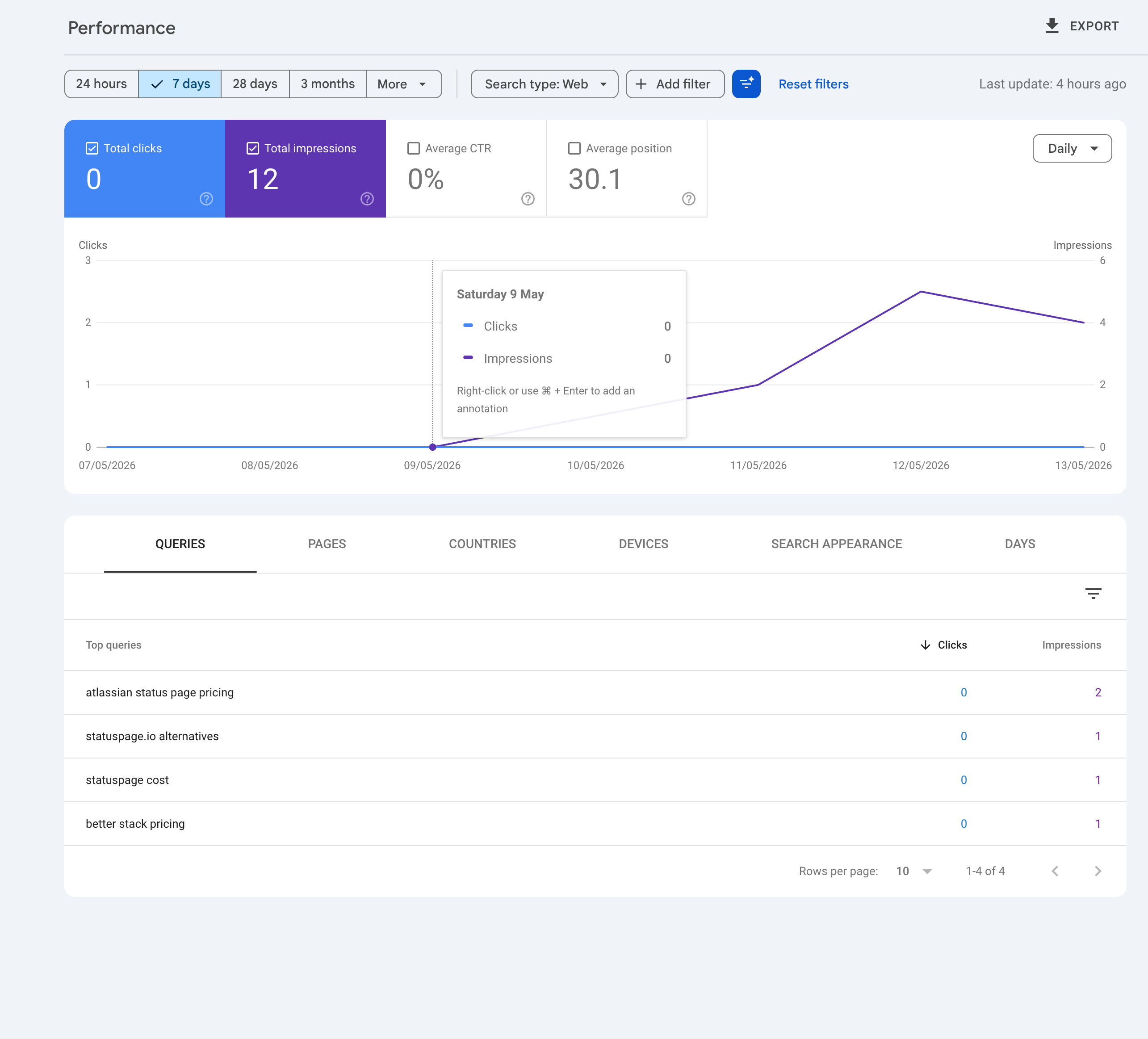

Performance over the last 7 days:

- 12 impressions

- 0 clicks

- 0% CTR

- Average position: 30.1

The interesting part is which queries triggered those impressions:

- "atlassian status page pricing" (2 impressions)

- "statuspage.io alternatives" (1 impression)

- "statuspage cost" (1 impression)

- "better stack pricing" (1 impression)

Every single one of those is a buyer-intent query. Someone typed those words because they were comparing tools to buy. The /alternatives pages were built for exactly that intent, and Google has matched them to those queries.

An average position of 30 puts the typical impression around the bottom of the third page of search results. Almost no one clicks past page 1, so 0 clicks at position 30 is mechanical, not a content problem. The work in the next 4 to 12 weeks isn't writing better pages. It's accumulating the page authority signals (backlinks, dwell time, click-through on the impressions I'm already getting) that move a page from position 30 toward position 10.
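
The arithmetic behind "third page" and "0% CTR" is small enough to sketch. This assumes 10 organic results per page, which real result pages don't always honor; `serpPage` and `ctrPercent` are hypothetical helper names.

```typescript
// Assumption: 10 organic results per results page.
const RESULTS_PER_PAGE = 10;

// Map a (possibly fractional) average position to a results page number.
function serpPage(position: number, perPage: number = RESULTS_PER_PAGE): number {
  return Math.floor((position - 1) / perPage) + 1;
}

// Click-through rate as a percentage; defined as 0 when there are no impressions.
function ctrPercent(clicks: number, impressions: number): number {
  return impressions === 0 ? 0 : (clicks / impressions) * 100;
}

const page = serpPage(30.1); // 3: bottom of the third page
const ctr = ctrPercent(0, 12); // 0
```

Under the same assumption, anything at position 11 or worse is already off page 1, which is why the position number matters more than the click count this early.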

Why the gap matters

ChatGPT and Google both index. Only Google ranks.

That's the whole insight, and it's worth saying plainly.

ChatGPT (and Claude, and Perplexity, and the AI Overviews layer on Google itself) retrieves content based on whether it answers the question. Similarity match, snippet quality check, into the candidate pool. There's no version of "this page is 14 years older than yours, so it goes first." Authority barely exists in the retrieval layer.

Google's organic ranking has the same similarity match, but layered on top is a ranking step that compounds over months and years: page authority, domain authority, external backlinks, CTR feedback on the SERP, dwell time, content depth versus the rest of the topic. A page that lands at position 30 on day 4 is being told "the content is relevant, but you haven't earned position 10." The earning is mostly time.
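
A toy model makes the difference concrete. This is not how either system actually scores pages; the `similarity` and `authority` numbers, the page names, and the multiply-them-together formula are all invented for illustration.

```typescript
// Toy model only: every number and the scoring formula are assumptions.
interface Page {
  url: string;
  similarity: number; // how well the page answers the query, 0 to 1
  authority: number; // accumulated trust signals, 0 to 1
}

// Retrieval-style ordering: relevance alone decides.
function retrievalOrder(pages: Page[]): string[] {
  return [...pages]
    .sort((a, b) => b.similarity - a.similarity)
    .map((p) => p.url);
}

// Ranking-style ordering: relevance is weighted by authority.
function rankingOrder(pages: Page[]): string[] {
  return [...pages]
    .sort((a, b) => b.similarity * b.authority - a.similarity * a.authority)
    .map((p) => p.url);
}

const pages: Page[] = [
  { url: "new-indie-page", similarity: 0.9, authority: 0.1 },
  { url: "old-incumbent-page", similarity: 0.7, authority: 0.9 },
];

const retrieved = retrievalOrder(pages); // new-indie-page first
const ranked = rankingOrder(pages); // old-incumbent-page first
```

Same two pages, opposite order. The only thing that changed is whether authority enters the score.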

For an indie founder, two implications.

If you only watch Google Search Console clicks in week 1, you'll declare your SEO strategy dead. It isn't. The Google trajectory is 4 to 12 weeks for fresh content on competitive keywords, not 4 to 12 days.

If you only watch your AI search mentions, you'll get a flattering early signal that doesn't pay rent. ChatGPT-style retrievers don't drive a lot of click traffic yet, and the hallucination problem means you can't trust your own measurement. They're a leading indicator, not a revenue source.

Same content, two clocks. Read them differently.

What I did between the two snapshots

A few small things that matter, and a list of things I deliberately didn't do.

Did:

- Added 5 inline links from the homepage body pointing into the alternatives section (Statuspage.io and Better Stack get linked where their names already appear in the body copy; the other two pages will get inline links as the homepage copy evolves). Added an "Alternatives" entry in the top nav. Internal link authority pointing into fresh pages helps Google decide to crawl and judge them faster.

- Fixed a canonical issue. The apex domain (statuspagebuddy.com) was redirecting to www with a 307 (temporary) status instead of a permanent 301 or 308. Google reads 307 as "this is temporary, keep both URLs in mind." I changed it to a 308 Permanent Redirect and added metadataBase to the Next.js layout so canonical URLs resolve against the www origin and every page emits an explicit canonical tag pointing there.
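
The redirect and canonical logic from the second item can be sketched as two small functions. `classifyRedirect` and `canonicalUrl` are hypothetical helpers, not Next.js APIs, and the `https://www.statuspagebuddy.com` origin assumes the site serves over https.

```typescript
// HTTP redirect status codes grouped by permanence.
const PERMANENT_REDIRECTS = new Set([301, 308]);
const TEMPORARY_REDIRECTS = new Set([302, 303, 307]);

type RedirectKind = "permanent" | "temporary" | "not-a-redirect";

function classifyRedirect(status: number): RedirectKind {
  if (PERMANENT_REDIRECTS.has(status)) return "permanent";
  if (TEMPORARY_REDIRECTS.has(status)) return "temporary";
  return "not-a-redirect";
}

// Assumption: the canonical origin is the www host over https.
const CANONICAL_ORIGIN = "https://www.statuspagebuddy.com";

// Resolve a site-relative path against one fixed origin, so every
// canonical URL points at the same host regardless of how the page was reached.
function canonicalUrl(path: string, origin: string = CANONICAL_ORIGIN): string {
  return new URL(path, origin).toString();
}

const before = classifyRedirect(307); // "temporary"
const after = classifyRedirect(308); // "permanent"
const canonical = canonicalUrl("/alternatives/better-stack");
```

In a Next.js app, `metadataBase` plays the role of `CANONICAL_ORIGIN` here: it is the base that relative metadata URLs are resolved against.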

Did not:

- Resubmit any of the alternatives URLs to Google after the first submission. Each resubmission resets the indexing queue.

- Edit the content of any alternatives page after publishing. Edits reset the evaluation window. The current trajectory is the trajectory.

- Ship more alternatives pages this week. Dropping many similar pages on a young site triggers Google's site-wide quality assessment in the wrong direction.

The annoying thing about indie SEO is that the most effective lever in the early window is patience.

What this means for you

If you're shipping SEO content and your week-1 clicks are zero, that's the expected outcome. Watch position trajectory, not click count. A page moving from position 50 to position 25 in four weeks is winning even at zero clicks.
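
If you export average position from GSC on a schedule, the trajectory check is a single subtraction. A minimal sketch, assuming a hypothetical `PositionSample` shape and using only the 50-to-25 example from the paragraph above:

```typescript
// Hypothetical shape for one exported data point.
interface PositionSample {
  week: number;
  avgPosition: number;
}

// Positive result means the page moved toward page 1 over the window.
function positionsGained(samples: PositionSample[]): number {
  if (samples.length < 2) return 0;
  const first = samples[0].avgPosition;
  const last = samples[samples.length - 1].avgPosition;
  return first - last;
}

const samples: PositionSample[] = [
  { week: 0, avgPosition: 50 },
  { week: 4, avgPosition: 25 },
];

const gained = positionsGained(samples); // 25
const isImproving = gained > 0; // true, even with zero clicks
```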

Run a parallel AI search check on your content. It's free and the feedback loop is faster. Just don't confuse "ChatGPT named us" with "we have customers." You can't book revenue from a hallucination-prone retrieval layer.

Most importantly: write the asset, then leave it alone. The single biggest mistake I see indie founders make is shipping a page, panicking at the data 5 days later, then editing it to "fix" what's working as expected. The compounding only happens if you let it compound.

The four alternatives pages are at statuspagebuddy.com/alternatives. They won't rank on Google for weeks. ChatGPT has read them, and so will whoever types these buyer queries next.

Reply with whatever your week-1 SEO data looked like, or just lurk. Both work.

— Hao

Building StatusPageBuddy